Specialist AI vs Generic AI: Which Actually Works for Planning Objections?

We benchmarked Objector against generic AI using real UK planning applications. Discover why accuracy matters — and why generic AI misses 1 in 3 critical issues.

AI tools are increasingly being used to generate planning objections. From homeowners to planning consultants, the appeal is obvious — faster analysis, lower cost, and instant outputs.

But there’s a critical question: do these tools identify the issues that actually influence planning decisions?

To answer this, we benchmarked Objector — a specialist AI built for the UK planning system — against generic AI tools using real planning applications and officer reports.

The results highlight a clear gap in performance — especially where it matters most.

Benchmarking AI Against Real Planning Decisions

Most AI comparisons rely on:

synthetic prompts

subjective scoring

or simplified test cases

This benchmark uses a different approach.

Each tool was run on real planning applications, and its output was scored against the grounds identified by planning officers in their official reports.

This means the test measures:

decision relevance

policy accuracy

technical understanding

—not just whether the output “sounds right.”

Key Results

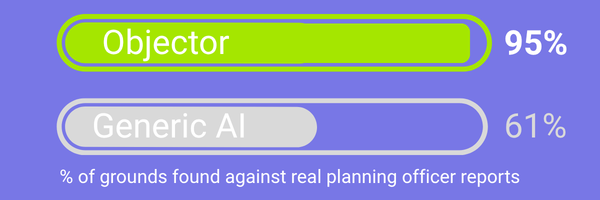

A 34% point accuracy gap

Objector identified 35 of 36 decision-making grounds

Generic AI identified 25 of 36

Generic AI missed 11 of 36, or roughly 1 in 3, material objection grounds

Scope: Tested against 7 real planning applications across all 4 UK jurisdictions
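The scoring behind these results can be sketched in a few lines of Python. This is a minimal illustration, not Objector's benchmarking code. It assumes each officer-identified ground and each ground raised by a tool has been reduced to a short label, so a match is an exact overlap between labels. The labels and the names `Score`, `score_application` and `aggregate` are hypothetical. In practice, deciding whether a tool has raised the same ground as the officer report would take human judgement.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Score:
    """Grounds a tool recovered out of those identified by the planning officer."""

    matched: int  # officer-identified grounds the tool also raised
    total: int    # all officer-identified grounds for the application(s)

    @property
    def detection_rate(self) -> float:
        return self.matched / self.total

    @property
    def missed(self) -> int:
        return self.total - self.matched


def score_application(officer_grounds: set[str], tool_grounds: set[str]) -> Score:
    # Points the tool raises that the officer did not treat as a ground are
    # ignored: only the overlap with the officer report counts.
    return Score(matched=len(officer_grounds & tool_grounds), total=len(officer_grounds))


def aggregate(scores: list[Score]) -> Score:
    # Pool counts across applications (a micro-average), so an application
    # with more grounds carries proportionally more weight.
    return Score(
        matched=sum(s.matched for s in scores),
        total=sum(s.total for s in scores),
    )


if __name__ == "__main__":
    # Hypothetical ground labels for one application (illustrative only).
    officer = {"heritage_setting", "noise_bs4142", "biodiversity"}
    tool = {"noise_bs4142", "traffic_generation"}
    print(score_application(officer, tool))  # Score(matched=1, total=3)

    # Headline totals reported in this benchmark.
    objector = Score(matched=35, total=36)
    generic = Score(matched=25, total=36)
    print(f"Objector:   {objector.detection_rate:.1%}")  # 97.2%
    print(f"Generic AI: {generic.detection_rate:.1%}")   # 69.4%
    print(f"Generic AI missed {generic.missed} of {generic.total} "
          f"({generic.missed / generic.total:.1%})")     # 11 of 36 (30.6%)
```

Pooling counts matches the way the headline figures are stated (35 of 36, 25 of 36). Averaging per-application rates instead would give each of the 7 applications equal weight, regardless of how many grounds it had.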

Why Generic AI Falls Short in Planning

Generic AI models are designed to be broad and flexible. They can summarise documents and raise general concerns — but planning decisions require more than that.

A valid planning objection must:

reference specific policy

apply the correct legal framework

identify technical deficiencies

In this benchmark, generic AI consistently underperformed in these areas.

Where Generic AI Struggles

Generic AI was weakest in:

Heritage and statutory duties

Noise assessment methodology (e.g. BS 4142)

Biodiversity and ecology requirements

Jurisdiction-specific policy frameworks

These are often the most critical factors in planning decisions

A Better Alternative to Mass-Generated Objections

One of the unintended consequences of generic AI is the rise of mass-produced planning objections.

It’s now possible to generate hundreds of near-identical letters in minutes. While this may feel like it strengthens the opposition, in practice it can have the opposite effect.

Planning officers are required to assess:

material planning considerations

not the number of submissions

Large volumes of repetitive or low-quality objections can:

add administrative burden

dilute stronger, evidence-based points

reduce the overall effectiveness of submissions

In planning, quality carries more weight than quantity

Pooling Resources: A More Effective Approach

Instead of flooding the system with duplicated objections, Objector supports a different model:

Crowdfunded, policy-backed objections built by the community

This approach allows individuals to:

contribute to a single, high-quality objection

ensure it is professionally structured and policy-based

avoid duplication and inconsistency

By pooling resources, communities can:

focus effort where it matters

produce stronger, more credible submissions

reduce noise in the planning process

The Difference: General Concerns vs Decision-Grade Grounds

One of the clearest findings was the difference between:

general observations

and policy-backed planning grounds

For example:

Generic AI might say:

“The development could impact nearby residents due to noise.”

A valid planning ground requires:

reference to specific standards

identification of missing methodology

linkage to policy requirements

Planning decisions are based on evidence and policy — not general concerns

The Real Gap

Generic AI misses 1 in 3 critical planning issues

Even when it raises relevant topics, it often fails to:

apply the correct policy

assess technical compliance

identify decision-level issues

Why Specialist AI Performs Better

Objector is not a general-purpose tool. It runs three advanced AI models and cross-validates their findings to improve accuracy. It has been trained on UK planning policy and developed in partnership with parish councils.

It is built specifically for the planning system using:

jurisdiction-aware policy frameworks

structured ground identification

specialist evaluation models

These include analysis of:

transport and highways

noise and amenity

ecology and biodiversity

flood risk and drainage

This allows it to identify not just what might be wrong, but what matters in a planning decision

Why This Matters for Planning Objections

Submitting a planning objection is not just about raising concerns.

It’s about:

identifying material planning considerations

aligning with policy and guidance

presenting issues in a way that decision-makers must consider

Missing key grounds can mean:

weaker objections

reduced credibility

or no impact on the outcome

Can Generic AI Still Be Useful?

Yes — but with limitations.

Generic AI can help with:

summarising documents

drafting initial text

highlighting obvious issues

However, it should not be relied on for:

identifying all relevant planning grounds

applying policy correctly

evaluating technical reports

Conclusion: When Accuracy Matters, Specialisation Wins

AI is transforming how planning objections are created.

But not all AI is equal.

Planning decisions depend on precise, policy-backed reasoning — not generalised outputs.

This benchmark shows that specialist AI clearly outperforms generic tools when evaluated against real planning decisions: Objector identified 35 of 36 decision-making grounds, against 25 of 36 for generic AI.

For users submitting planning objections, that difference can be critical.